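Maximum likelihood estimation (MLE) is the objective function that many machine learning (ML) models optimize.

It is probably one of the first things everyone meets when learning ML, yet it may be more subtle than you think.

This post has two main parts: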

A. Maximum Likelihood Estimation (MLE):

After a brief introduction to MLE, we point out the problems that arise in practice, then show how MLE relates to mean squared error (MSE) and KL divergence.

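B. What a generative model tries to learn:

We first describe the generative-model setting, then move on to MLE with latent variables.

Finally, we show how VAE, Diffusion (DDPM), GAN, flow-based models, and Continuous Normalizing Flows (CNF) each relate to MLE.

What to Understand Before Reading Flow Matching

Next post: Trying to Understand Flow Matching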

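If, like me, you read the flow matching paper and were knocked out while it was still covering the preliminaries, don't worry, you are not alone! That is exactly where I died too.

The paper relies on the Continuity Equation in many of its derivations, so this theorem is effectively one of its core concepts.

Determined to understand it properly, I ended up learning the beautiful Gauss’s Divergence Theorem and the Continuity Equation!

It clicked! Everything flows now! … my Ren and Du meridians, I mean.

🎉 This also happens to be the 100th post on this blog, so I decided to go with this topic! 🎉

Let's step into the wonderful world of “Physics feat. Machine Learning (Physics x ML)”!

(Figure source file for this post: div_fig.drawio)

We cover the material in the following parts: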

A. Vector Field

B. Divergence

C. Gauss’s Divergence Theorem

D. Mass Conservation or Continuity Equation

E. How does this relate to Flow Matching?
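For orientation, the two results named in parts C and D are usually written as below. This is the standard textbook form, not notation taken from the post: the symbols $\mathbf{F}$ (a vector field), $V$ (a region with boundary $\partial V$ and outward unit normal $\mathbf{n}$), $\rho$ (a density), and $\mathbf{v}$ (a velocity field) are assumptions introduced here.

$$\int_V \nabla\cdot\mathbf{F}\,dV=\oint_{\partial V}\mathbf{F}\cdot\mathbf{n}\,dS$$

$$\frac{\partial\rho}{\partial t}+\nabla\cdot(\rho\mathbf{v})=0$$

The second follows from the first. Mass conservation says $\frac{d}{dt}\int_V\rho\,dV=-\oint_{\partial V}\rho\mathbf{v}\cdot\mathbf{n}\,dS$, the divergence theorem turns the surface integral into $\int_V\nabla\cdot(\rho\mathbf{v})\,dV$, and since $V$ is arbitrary the integrands must agree.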

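A High-Dimensional Normal Distribution Looks Like... an Eggshell?

Interpolating latent variables

Remember the classic word embedding property? Mikolov's classic word2vec paper was quite a shock when it came out:

$$\mathbf{e}_{king}-\mathbf{e}_{man}+\mathbf{e}_{woman}\approx\mathbf{e}_{queen}$$

This suggests that the embedding space satisfies some kind of linearity, which is why embeddings are usually interpolated linearly:

$$\mathbf{e}_{new}=t\mathbf{e}_1+(1-t)\mathbf{e}_2$$

Now let's turn to generative models.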

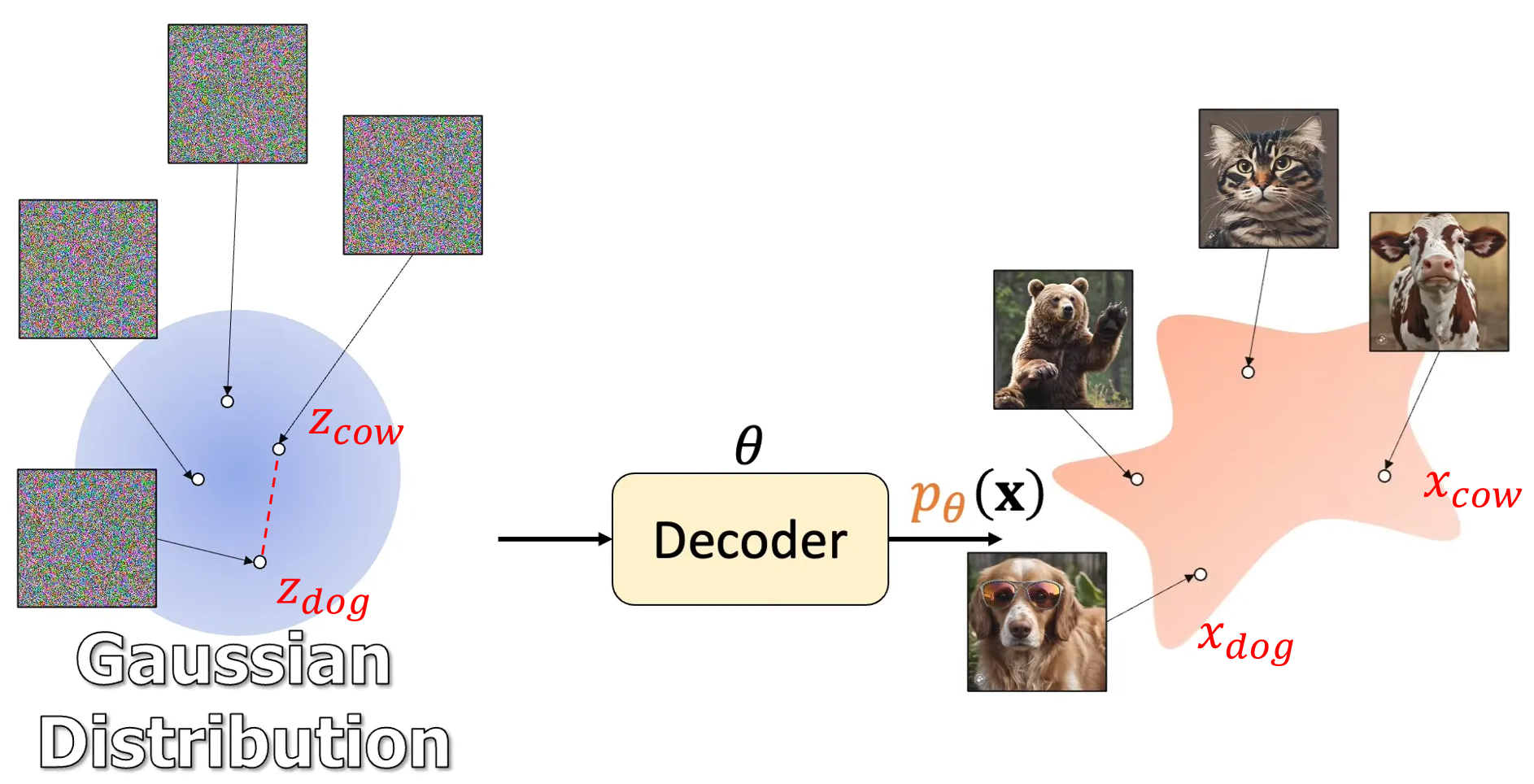

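Whether it is a VAE, GAN, flow-based, diffusion-based, or flow matching model, each one uses a NN to learn a mapping from a distribution that is simple and easy to sample from, e.g. the standard normal distribution $\mathcal{N}(\mathbf{0},\mathbf{I})$, to the data distribution $p_{data}$.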

$$\mathbf{x}=\text{Decoder}_\theta(\mathbf{z}),\quad \mathbf{z}\sim\mathcal{N}(\mathbf{0},\mathbf{I})$$

In practice, the variable $\mathbf{z}$ in Fig. 1 of Lil’Log's “What are Diffusion Models?” is almost always drawn from $\mathcal{N}(\mathbf{0},\mathbf{I})$.

(Borrowing a figure from Jia-Bin Huang's video How I Understand Diffusion Models as an example)

So if we want to generate a mix of a dog and a cow, can we do it like this?

$$\text{Decoder}_\theta(t\mathbf{z}_{dog}+(1-t)\mathbf{z}_{cow}), \quad t\in[0,1]$$

The answer is no: the results are poor. So what should we do instead? We should actually do this [1]:

$$\text{Decoder}_\theta(\cos(t\pi/2)\mathbf{z}_{dog}+\sin(t\pi/2)\mathbf{z}_{cow}), \quad t\in[0,1]$$

That is, use spherical linear interpolation [2].
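A minimal sketch of why the two formulas behave differently, using only the Python standard library. It assumes the usual explanation for the "eggshell" picture: samples from $\mathcal{N}(\mathbf{0},\mathbf{I})$ in $d$ dimensions have a norm close to $\sqrt{d}$, so a decoder mostly sees inputs on that thin shell. The names `linear_mix`, `spherical_mix`, `dim`, and the seed are illustrative choices, not taken from the post or from any library.

```python
import math
import random


def norm(v):
    """Euclidean norm of a list of floats."""
    return math.sqrt(sum(a * a for a in v))


def linear_mix(z_a, z_b, t):
    """Linear interpolation t * z_a + (1 - t) * z_b, as in the first formula."""
    return [t * a + (1.0 - t) * b for a, b in zip(z_a, z_b)]


def spherical_mix(z_a, z_b, t):
    """cos(t*pi/2) * z_a + sin(t*pi/2) * z_b, as in the second formula.

    This coincides with spherical linear interpolation when z_a and z_b
    are orthogonal, which two independent high-dimensional Gaussian
    samples approximately are.
    """
    c = math.cos(t * math.pi / 2.0)
    s = math.sin(t * math.pi / 2.0)
    return [c * a + s * b for a, b in zip(z_a, z_b)]


rng = random.Random(0)  # fixed seed, arbitrary choice
dim = 4096              # hypothetical latent dimension
z_dog = [rng.gauss(0.0, 1.0) for _ in range(dim)]
z_cow = [rng.gauss(0.0, 1.0) for _ in range(dim)]
typical = math.sqrt(dim)  # norm around which N(0, I) samples concentrate

# Print each interpolant's norm relative to the typical shell radius.
for t in (0.0, 0.25, 0.5, 0.75, 1.0):
    lin = norm(linear_mix(z_dog, z_cow, t)) / typical
    sph = norm(spherical_mix(z_dog, z_cow, t)) / typical
    print(f"t={t:.2f}  linear={lin:.3f}  spherical={sph:.3f}")
```

At the midpoint the linear interpolant's norm shrinks to roughly $\sqrt{d/2}$, well inside the shell, while the spherical version stays near $\sqrt{d}$ for every $t$.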

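Summary of Continuity, Differentiation, Integration, and Brownian Motion for Stochastic Processes

Notes on the Coursera Stochastic Processes course, ten posts in total:

- Week 0: Some preliminaries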

- Week 1: Introduction & Renewal processes

- Week 2: Poisson Processes

- Week 3: Markov Chains

- Week 4: Gaussian Processes

- Week 5: Stationarity and Linear filters

- Week 6: Ergodicity, differentiability, continuity

- Week 7: Stochastic integration & Itô formula

- Week 8: Lévy processes

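- Summary of Continuity, Differentiation, Integration, and Brownian Motion for Stochastic Processes (this post)

Based on the Stochastic Processes course I took earlier, this post collects the definitions and the sufficient or necessary-and-sufficient conditions for each of the following:

- Stochastic continuity

- Stochastic differentiability

- Stochastic integral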

- Brownian Motion

I forget these every so often and looking them up again is a hassle, so I put them together in one post.

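Three Uses of the Evidence Lower BOund (ELBO)

Maximizing the log-likelihood is the training objective of many models. Besides the observed data $x$ (the collected training data), training often also involves an unobservable hidden variable $z$ (for example, the encoder output in a VAE).

The Evidence Lower BOund (ELBO) is the key to doing MLE when latent variables are present. Because the ELBO is a lower bound on the MLE objective, we maximize the ELBO in order to push the likelihood as high as possible. The same procedure can also be used to find a function that approximates the posterior $p(z|x)$. This post records how the ELBO is used differently in three settings: Variational Inference (VI), the Expectation Maximization (EM) algorithm, and Diffusion Models.

There is a fair amount of math, so let's get started!

Let's open with a hearty serving of math manipulation.

Let $p,q$ both be distributions; then the following identity holds:

$$\begin{aligned}
\log p(x) &= KL(q(z)\|p(z|x))+ {\color{orange}{ \mathbb{E}_{z\sim q}\left[\log \frac{p(x,z)}{q(z)}\right]} }\\
&=KL(q(z)\|p(z|x))+{\color{orange}{ \mathbb{E}_{z\sim q}\left[\log p(x,z) - \log q(z)\right]} } \\
&=KL(q(z)\|p(z|x))+{\color{orange}{\mathcal{L}(q)}}
\end{aligned}$$

Since $KL(\cdot)\geq0$, we have $\mathcal{L}(q)\leq\log p(x)$.

Essentially, the identity above holds as long as $p$ and $q$ are distributions.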
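The identity can be checked numerically on a toy case. The sketch below assumes a discrete latent variable $z$ with three values and a single fixed observation $x$; the numbers in `joint_xz` and `q` are made up for illustration, and `elbo_decomposition` is a hypothetical helper name.

```python
import math


def elbo_decomposition(joint_xz, q):
    """Return (log p(x), KL(q || p(z|x)), ELBO) for a discrete latent z.

    joint_xz[k] is p(x, z=k) for one fixed x; q[k] is q(z=k).
    """
    evidence = sum(joint_xz)                       # p(x) = sum_z p(x, z)
    posterior = [p / evidence for p in joint_xz]   # p(z | x)
    kl = sum(qz * math.log(qz / pz)
             for qz, pz in zip(q, posterior) if qz > 0)
    elbo = sum(qz * math.log(pxz / qz)
               for qz, pxz in zip(q, joint_xz) if qz > 0)
    return math.log(evidence), kl, elbo


# Made-up numbers: p(x, z=k) for k = 0, 1, 2 and an arbitrary q(z).
joint_xz = [0.10, 0.05, 0.25]
q = [0.2, 0.3, 0.5]

log_px, kl, elbo = elbo_decomposition(joint_xz, q)
print("log p(x)  =", log_px)
print("KL + ELBO =", kl + elbo)

# Choosing q equal to the true posterior drives the KL term to zero,
# so the ELBO then equals log p(x).
posterior = [p / sum(joint_xz) for p in joint_xz]
_, kl_opt, elbo_opt = elbo_decomposition(joint_xz, posterior)
print("KL at q = p(z|x):", kl_opt)
```

Any other valid `q` gives the same sum `kl + elbo`; only the split between the two terms changes.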

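Neural Architecture Search (NAS) Notes

Following the taxonomy of Microsoft NNI [1], NAS methods fall into:

$\circ$ Multi-trial NAS

$\circ$ One-shot NAS (the focus here)

We focus on introducing each method and the problem it aims to solve; experimental results are not recorded.

Note: NAS remains a very active field, and with my limited ability I can only record where my own study currently stands.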

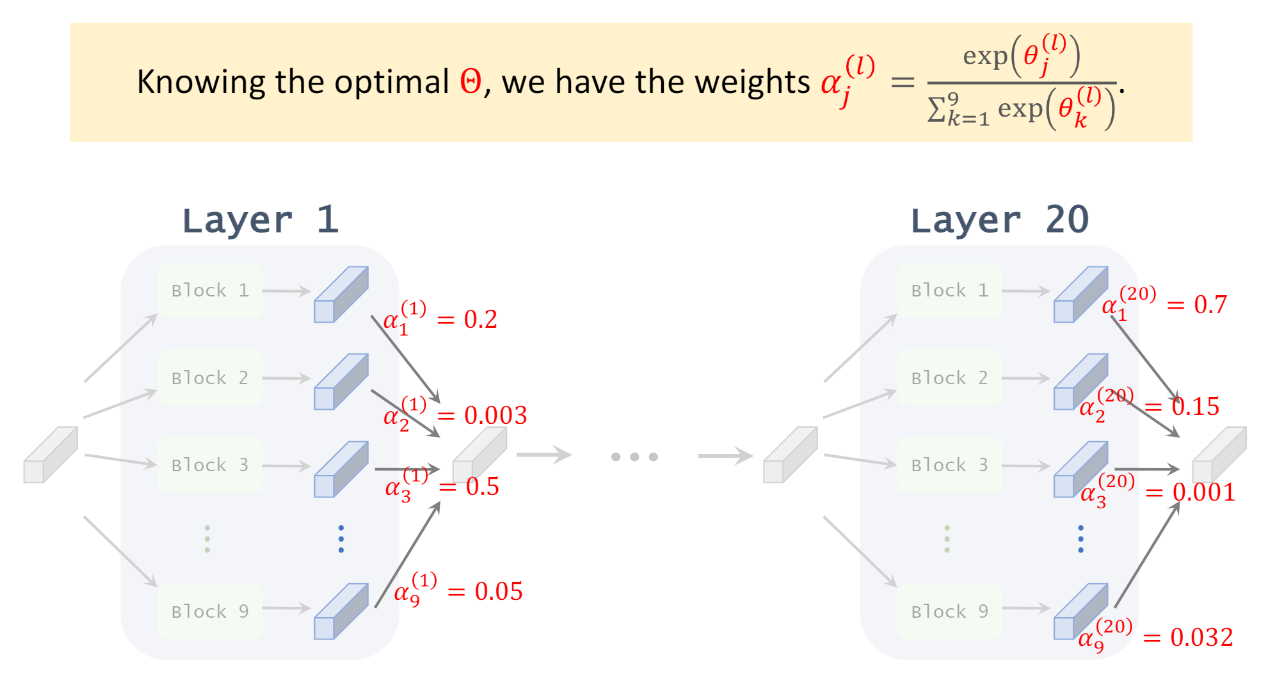

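Reading the Classic DARTS Paper (Math Derivations Mapped to Code)

Before this paper, mainstream NAS (Neural Architecture Search) methods used evolution or RL to search over a discrete space. They achieved the best results of the time, but the search cost was very high.

This paper proposes DARTS (Differentiable ARchiTecture Search), which turns NAS into a continuous relaxation problem so that gradient-based optimization can be applied. As a result it is about an order of magnitude faster than traditional methods. Gradient-based NAS existed before this paper, but earlier methods could not be applied to as wide a variety of architectures as DARTS; in short, they were not general enough.

The core idea: if a layer can contain multiple OPs (operations) and we have a way to find which OPs are best, then doing this for every layer completes the NAS.

Image source; alternatively, see this YouTube explanation, which is very clear and easy to follow.

The key question, though, is how to search. It sounds as if every OP needs its own trainable weight, with the highest-weighted OPs selected at the end. And how are these architecture weights trained?

Or by analogy: directly train one very large super network, then decide which OPs to keep based on each OP's architecture weight, roughly like model pruning.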
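The "one trainable weight per OP" idea can be sketched without any deep learning framework. The toy below assumes the softmax relaxation used in the DARTS paper: a layer outputs the softmax-weighted sum of all candidate OPs, and after the search the OP with the largest architecture weight is kept. The scalar OPs in `CANDIDATE_OPS` and the names `mixed_op` and `select_op` are placeholders for illustration, not the paper's code.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


# Toy scalar stand-ins for real OPs such as conv3x3, skip-connect, zero.
CANDIDATE_OPS = {
    "identity": lambda x: x,
    "double": lambda x: 2.0 * x,
    "zero": lambda x: 0.0,
}


def mixed_op(x, alphas):
    """Continuous relaxation: softmax(alphas)-weighted sum of every OP's output."""
    weights = softmax(alphas)
    return sum(w * op(x) for w, op in zip(weights, CANDIDATE_OPS.values()))


def select_op(alphas):
    """Discretization after the search: keep the OP with the largest weight."""
    names = list(CANDIDATE_OPS)
    best = max(range(len(alphas)), key=lambda i: alphas[i])
    return names[best]


alphas = [0.1, 2.0, -1.0]  # hypothetical architecture weights, one per OP
print(mixed_op(1.0, alphas))
print(select_op(alphas))
```

Because `mixed_op` is differentiable in `alphas`, the architecture weights can in principle be trained by gradient descent alongside the ordinary network weights; how the two sets of weights are actually trained is what the derivation in the paper covers.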

Model Generalization with Flat Optimum

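When training a model, we stare at TensorBoard watching the training loss fall until it converges. After convergence every checkpoint has about the same training loss, so which checkpoint should we pick?

Just pick the ones with the lowest validation loss: from PAC we know the validation error approximately equals the test error (the larger the validation set, the better). But can we do better on generalization? Would it be more efficient to improve generalization during training itself?

Motivation

Many papers and viewpoints on improving generalization start from the idea of a “flat” optimum. The figure below illustrates the idea clearly ([image source]):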

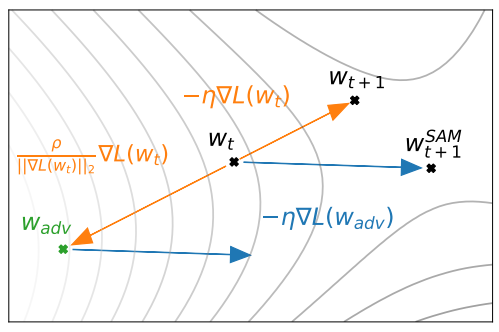

Sharpness-Aware Minimization (SAM) Paper Reading Notes

Let's look directly at how SAM updates the parameters, using Figure 2 of the paper:

The gradient step at the current weight $w_t$ is $-\eta\nabla L(w_t)$, which moves it to $w_{t+1}$.

SAM instead considers the point near $w_t$ where the loss is locally largest ($w_{adv}$) and uses the gradient descent vector at that point, $-\eta\nabla L(w_{adv})$, as the gradient step for $w_t$, which is why it ends up at $w_{t+1}^{SAM}$.

To state the main purpose of the SAM objective up front: SAM wants a $w$ whose locally largest loss is still small. Intuitively, it wants the neighborhood of $w$ to be flat. This is somewhat like the idea behind the Support Vector Machine (SVM): minimize the maximum loss.

The math derivation follows… there is a lot of it, so brace yourself.
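The update described above can be sketched on a toy quadratic loss. This assumes the first-order approximation from the SAM paper, where $w_{adv}$ is found by stepping a distance $\rho$ along the normalized gradient, $w_{adv}=w_t+\rho\,\nabla L(w_t)/\|\nabla L(w_t)\|$. The loss, the values of `lr` and `rho`, and the function names are illustrative assumptions.

```python
import math


def loss(w):
    """Toy quadratic loss with one flat and one sharp direction."""
    return 0.5 * (w[0] ** 2 + 10.0 * w[1] ** 2)


def grad(w):
    """Analytic gradient of the toy loss."""
    return [w[0], 10.0 * w[1]]


def sgd_step(w, lr):
    """Plain gradient step: w - lr * grad L(w)."""
    g = grad(w)
    return [wi - lr * gi for wi, gi in zip(w, g)]


def sam_step(w, lr, rho):
    """SAM-style step: take the gradient at w_adv, but apply it at w."""
    g = grad(w)
    g_norm = math.sqrt(sum(gi * gi for gi in g))
    if g_norm == 0.0:
        return list(w)  # already at a stationary point
    # First-order guess of the locally worst point within radius rho.
    w_adv = [wi + rho * gi / g_norm for wi, gi in zip(w, g)]
    g_adv = grad(w_adv)
    return [wi - lr * gi for wi, gi in zip(w, g_adv)]


w_t = [1.0, 1.0]
print("SGD step:", sgd_step(w_t, lr=0.1))
print("SAM step:", sam_step(w_t, lr=0.1, rho=0.05))
```

Each SAM step needs two gradient evaluations (one at $w_t$, one at $w_{adv}$), which is the main extra cost compared with a plain gradient step.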

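Introduction to Probably Approximately Correct (PAC): Notes on Hsuan-Tien Lin's Course

These are my notes for Week 4 of Professor Hsuan-Tien Lin's Coursera course 機器學習基石上 (Machine Learning Foundations)—Mathematical Foundations.

They explain why a model learned from training data can generalize to unseen data, which is what makes machine learning applicable in practice.

This sentence from the course unit sums it up well: “learning can be probably approximately correct when given enough statistical data and finite number of hypotheses”

The notes follow.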
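To make "enough statistical data and finite number of hypotheses" concrete, here is a small sketch based on the Hoeffding bound with a union bound over $M$ hypotheses, $P(|E_{in}-E_{out}|>\epsilon)\leq 2M\exp(-2\epsilon^2N)$. The function names and the example values of $\epsilon$, $\delta$, and $M$ are assumptions chosen for illustration.

```python
import math


def hoeffding_bound(n_samples, epsilon, n_hypotheses=1):
    """Upper bound on P(|E_in - E_out| > epsilon) for a finite hypothesis set."""
    return 2.0 * n_hypotheses * math.exp(-2.0 * epsilon ** 2 * n_samples)


def samples_needed(epsilon, delta, n_hypotheses=1):
    """Smallest N for which the bound above is at most delta."""
    return math.ceil(math.log(2.0 * n_hypotheses / delta) / (2.0 * epsilon ** 2))


# Example: 100 hypotheses, tolerance 0.1, failure probability at most 5%.
n = samples_needed(epsilon=0.1, delta=0.05, n_hypotheses=100)
print("samples needed:", n)
print("bound at that N:", hoeffding_bound(n, 0.1, 100))
```

The required sample size grows only logarithmically in the number of hypotheses but quadratically in $1/\epsilon$, which is why a finite hypothesis set plus enough data is sufficient for the guarantee.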